Generative models such as generative adversarial networks (GANs) have advanced remarkably, to the point where generated images are good enough that some say “the era of photographs as evidence is over.”

In this article, I would like to introduce a unique generation system called HumanGAN [1].

If you would like to review how GANs work first, please refer to the following article.

Contents

- What is HumanGAN

- Advantages of HumanGAN

- Disadvantages of HumanGAN

- Learning HumanGAN

- HumanGAN output result

- For those who want to learn more

1. What is HumanGAN

HumanGAN is a new learning method proposed by Fujii et al. [1].

HumanGAN, as the name implies, is a GAN that involves humans, and in this GAN, humans play the role of discriminator.

Figure 1 shows the difference between a regular GAN and HumanGAN. As far as I know, no other GAN learns interactively with humans the way HumanGAN does.

So what are the advantages of having a human act as the discriminator?

2. Advantages of HumanGAN

Fujii et al. cite the following two points as advantages of having humans play the discriminator.

- Humans have higher discriminative ability than a learned discriminator in the early stages of learning

- Humans can intuitively determine the distribution of correct data

In a GAN, if the Discriminator discriminates poorly, the quality of the data produced by the Generator naturally suffers. A learned Discriminator is weak early in training, whereas a human can avoid being fooled by the Generator from the very start.

Regarding the second point, “Humans can intuitively determine the distribution of correct data”, let’s consider a Generator that generates speech as an example.

First, consider learning in a normal GAN framework without human intervention. We collect a large amount of human conversation as correct data and start training. Suppose that, in the course of training, the Generator produces speech that sounds as if it was synthesized by a machine. If the Discriminator has learned well, this speech will be judged as fake, so the Generator will gradually stop generating it.

What is important here is that the Generator keeps learning to produce data that lies inside the distribution of the correct data. As learning progresses, data outside that distribution stops being generated.

Next, consider the case where a human acts as the Discriminator. In this case, correct data is not required. Suppose again that a partially trained Generator produces speech that sounds as if it was synthesized by a machine.

If the human is willing to accept the synthesized speech as speech, they tell the Generator that it sounds like speech; if not, they tell it that it does not. As this continues, the Generator comes to produce speech that the human acting as the Discriminator accepts.

In other words, humans can intuitively determine the distribution of correct data. If you are willing to accept slightly machine-like synthesized speech as speech, you can have the Generator produce that as well.

3. Disadvantages of HumanGAN

HumanGAN has its drawbacks, of course. The biggest one is that learning takes time. Every update has to wait for human responses, so adding more machine power does not speed it up.

In [1], crowdsourcing is used to recruit people and carry out the learning, but it is still difficult to train a Generator built on a large-scale network, so a Generator with a simple structure is used.

4. Learning HumanGAN

If you are familiar with how regular GANs learn, you may have wondered how HumanGANs learn.

In a normal GAN, the gradient calculated from the Discriminator’s output is passed to the Generator by backpropagation. Backpropagation (the error backpropagation method) is the standard way to train multi-layer neural networks: the error between the output the network actually produces for a given input and the output it should produce is propagated backward from the output layer to obtain a gradient for every weight.
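As a rough sketch of that gradient path in standard chain-rule notation (the symbols are my own shorthand and are not taken from [1]): let $\hat{x} = G(z;\theta)$ be a generated sample, $D(\hat{x})$ the Discriminator's output, and $L_G$ the Generator's loss.

$$
\frac{\partial L_G}{\partial \theta}
= \frac{\partial L_G}{\partial D(\hat{x})}
\cdot \frac{\partial D(\hat{x})}{\partial \hat{x}}
\cdot \frac{\partial \hat{x}}{\partial \theta},
\qquad \hat{x} = G(z;\theta)
$$

The middle factor, $\partial D(\hat{x}) / \partial \hat{x}$, is the one that is obtained by backpropagating through the Discriminator network.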

If the Discriminator is a human, however, there is no network to backpropagate through, so the Discriminator’s judgments cannot be passed to the Generator as gradients and learning cannot proceed in this way.

Therefore, HumanGAN uses a method called Natural Evolution Strategies to convey the Discriminator’s results to the Generator. Natural Evolution Strategies estimates gradients by perturbing the data and observing how the output changes; in short, it approximates the gradient of a black-box system. Intuitively, it is close to numerical differentiation, so it helps to picture something like that. This learning method is one of the major contributions of HumanGAN.
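To make the idea of approximating a black-box gradient concrete, here is a minimal sketch. It is not the procedure from [1]: `simulated_human_score` is a hypothetical stand-in for a human rater (an assumed smooth score that peaks at the origin of a toy 2-D feature space), and `nes_gradient`, `sigma`, `n_pairs`, and the step size are illustrative names and values chosen for this example.

```python
import numpy as np


def simulated_human_score(x):
    """Hypothetical stand-in for a human rater (an assumption, not from [1]).

    Returns a "naturalness" score in (0, 1] that peaks at the origin.
    """
    return float(np.exp(-0.5 * np.sum(x ** 2)))


def nes_gradient(score_fn, x, sigma=0.5, n_pairs=50, rng=None):
    """Approximate d(score)/dx for a black-box score_fn.

    Only the values of score_fn are used, never its internals, which is
    why the same idea can be applied when the scorer is a person.
    """
    rng = np.random.default_rng() if rng is None else rng
    grad = np.zeros_like(x, dtype=float)
    for _ in range(n_pairs):
        eps = rng.standard_normal(x.shape)
        # Two black-box queries per pair: x + sigma*eps and x - sigma*eps.
        delta = score_fn(x + sigma * eps) - score_fn(x - sigma * eps)
        grad += delta * eps
    return grad / (2.0 * sigma * n_pairs)


rng = np.random.default_rng(0)
x = np.array([1.5, -1.0])  # a toy "generated sample"
for step in range(200):
    # Gradient ascent: move the sample toward higher scores.
    x = x + 0.5 * nes_gradient(simulated_human_score, x, rng=rng)

print("final sample:", x)
print("final score:", simulated_human_score(x))
```

Each call to `nes_gradient` makes 2 × `n_pairs` queries to the black box. With a real human in the loop, every one of those queries would be a human judgment, which gives a feel for why waiting on responses dominates the training time.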

5. HumanGAN output result

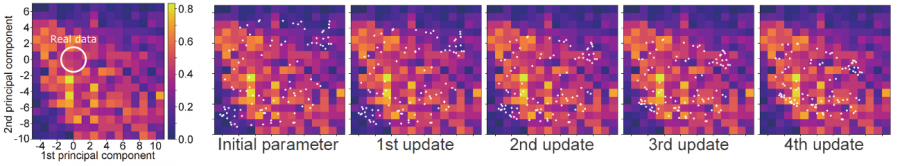

According to [1], the purpose of HumanGAN is to be able to generate a wider range of data than the data that can be collected from reality. Figure 2 shows that the speech data generated by HumanGAN covers a wider range than the actual speech data.

First, look at the leftmost panel. This is the result of an experiment with human subjects.

The X and Y axes represent the first and second principal components obtained by reducing the dimensionality of the data used in the experiment. The colormap ranges from 0 to 1, with values closer to 1 indicating that subjects judged the data to be natural. Of the data judged by the subjects, only the data inside the white circles were actual speech data.

From this, we can see that people perceive a wider range of natural speech than the actual speech data.

The remaining five panels show the leftmost panel with white dots plotted on top. The white dots represent data generated by HumanGAN. As learning progresses, the white dots move closer to the white circles, but they do not all gather inside them.

From this, you can see that HumanGAN can generate a wider range of data than the actual speech data.

6. For those who want to learn more

In this article, we introduced HumanGAN. Skill Up AI currently offers a GAN (generative adversarial network) course, in which you can learn about various GANs. There is also a free trial that lets you watch part of the course, so please consider it. If you want to learn GANs starting from the basics of deep learning, please also consider the deep learning basic course that can be used in the field.