Google Analytics lets you customize your tracking code in many ways. Although this gives you a lot of freedom, there are few opportunities to learn what other people and other sites have implemented. In practice, the only way to find out is to look at the beacons that are actually sent to Google Analytics.

In this article, I describe how I installed a self-made extension in my Google Chrome browser, stored the captured beacon data in BigQuery, and analyzed it.

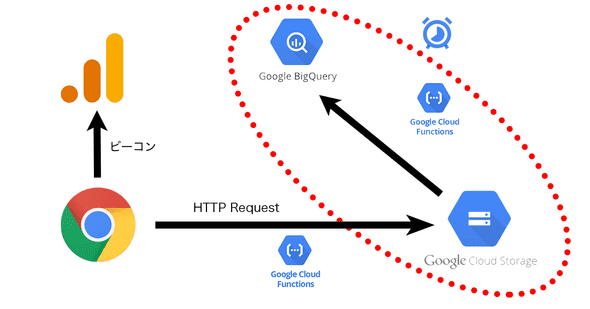

Measurement mechanism and system configuration

Overview

For this project, I developed an extension for Google Chrome. Since I only need to use it myself, I have not published it, and it runs only on my local machine. Whenever the browser sends a hit to Google Analytics, the extension extracts the contents of the beacon and sends them to my own database. As a result, every Google Analytics hit fired by a site I view in the browser with the extension installed is stored in a database that I manage.

Implementing extensions

The extension is implemented as a Google Chrome extension that runs in the background. Chrome lets an extension inspect, through event listeners, the HTTP requests the browser sends to each web server. I use this capability to pick out the HTTP requests that correspond to Universal Analytics beacons. The processing is simple: parse the value of each parameter sent to Google Analytics, apply some basic formatting, and send the result as JSON to Cloud Functions, the FaaS offering within Google Cloud.
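The detection and parsing step can be sketched as follows. This is written in Python purely to illustrate the logic; the extension itself would be written in JavaScript. The host and path lists, and the function names `is_ua_beacon` and `parse_beacon`, are assumptions made for this sketch, and it only covers hits whose parameters travel in the query string (hits sent by POST carry them in the request body instead).

```python
from urllib.parse import urlsplit, parse_qsl

# Assumption: hosts and paths commonly seen on Universal Analytics hits.
UA_HOSTS = {"www.google-analytics.com", "ssl.google-analytics.com"}
UA_PATHS = {"/collect", "/j/collect", "/r/collect"}


def is_ua_beacon(url: str) -> bool:
    """Return True if the URL looks like a Universal Analytics hit."""
    parts = urlsplit(url)
    return parts.hostname in UA_HOSTS and parts.path in UA_PATHS


def parse_beacon(url: str) -> dict:
    """Decode the query string of a beacon into a flat parameter dict."""
    query = urlsplit(url).query
    # keep_blank_values keeps parameters that were sent with an empty value.
    return dict(parse_qsl(query, keep_blank_values=True))
```

The resulting dictionary maps each parameter name (for example the hit type or the tracking ID) to its decoded value, which is the form that is easiest to serialize as JSON.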

Because the data will eventually be analyzed in BigQuery, the standard Google Analytics parameters are stored as separate columns. However, the number of possible parameters is huge and some are rarely used, so the remaining parameters are not parsed individually and are instead packed into a single column as JSON.
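A minimal sketch of this split, in Python for illustration, might look like the following. Which parameters count as "standard", the column names, and the `extra_params` column are all assumptions of this sketch, not the actual schema used.

```python
import json

# Assumption: the set of "standard" parameters and their column names
# are choices made for this sketch only.
STANDARD_COLUMNS = {
    "v": "protocol_version",
    "t": "hit_type",
    "tid": "tracking_id",
    "cid": "client_id",
    "dl": "document_location",
    "dt": "document_title",
    "ec": "event_category",
    "ea": "event_action",
    "el": "event_label",
}


def to_row(params: dict) -> dict:
    """Map standard parameters to columns and pack the rest as JSON."""
    row = {column: params.get(key) for key, column in STANDARD_COLUMNS.items()}
    extras = {k: v for k, v in params.items() if k not in STANDARD_COLUMNS}
    # Everything else goes into a single JSON string column.
    row["extra_params"] = json.dumps(extras, sort_keys=True)
    return row
```

Keeping the rarely used parameters in one JSON column means the table schema does not have to change when a site sends a parameter that has not been seen before.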

Server-side processing

Cloud Functions receives the beacon information from the extension. Cloud Functions is a service for running short-lived processing in the cloud: a server instance is started only when the function is called and is stopped when processing completes. Because the fee depends on the number of invocations (and on the memory, CPU, and so on that are used), it suits programs that do not need to run constantly and are invoked relatively rarely.

Cloud Functions writes the received Google Analytics parameters, in JSON format, to Google Cloud Storage as one file per request. Because one file is created for every hit, the files are stored in directories divided by date.
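One way to lay out the date-divided objects is sketched below. The `hits/` prefix, the `YYYY/MM/DD` layout, and the use of a random ID for the file name are assumptions for illustration; the actual naming scheme is not described here.

```python
import uuid
from datetime import datetime, timezone
from typing import Optional


def object_name(received_at: datetime, hit_id: Optional[str] = None) -> str:
    """Build a date-partitioned object name for one hit.

    Assumption: the "hits/" prefix and YYYY/MM/DD layout are hypothetical.
    """
    # A random ID keeps concurrent requests from overwriting each other.
    hit_id = hit_id or uuid.uuid4().hex
    return f"hits/{received_at:%Y/%m/%d}/{hit_id}.json"


# Example: name for a hit received now (UTC).
example_name = object_name(datetime.now(timezone.utc))
```

With this layout, everything for one day shares a common prefix, so a daily batch job can list just that prefix.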

The per-hit log files are loaded into BigQuery in a daily batch. This load process also uses Cloud Functions: a function downloads one day’s worth of files from Cloud Storage, combines them into a single JSON payload, and loads it into BigQuery. To run this once a day, I use Cloud Scheduler, a recently launched Google Cloud service.
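The combining step can be sketched as below, assuming the load uses newline-delimited JSON, which is a JSON layout BigQuery accepts for load jobs. Whether the actual batch uses this layout is an assumption, and `to_ndjson` is a hypothetical helper name. Downloading from Cloud Storage and starting the load job are left out.

```python
import json
from typing import Iterable


def to_ndjson(hit_files: Iterable[str]) -> str:
    """Combine per-hit JSON documents into newline-delimited JSON."""
    lines = []
    for text in hit_files:
        record = json.loads(text)  # raises on a corrupt file
        # Re-serialize compactly so each record occupies exactly one line.
        lines.append(json.dumps(record, separators=(",", ":")))
    return "\n".join(lines) + "\n" if lines else ""
```

Re-serializing each record, rather than concatenating the raw files, guards against files that contain pretty-printed JSON spanning several lines.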

Image diagram of these mechanisms

The mechanisms described above fit together as follows.

Summary of measured data

The number of page views sent, the number of domains visited, the number of destination properties, etc.

Since I set this up on February 8, 2019, every Google Analytics beacon sent by the pages I viewed in Google Chrome has been recorded in my BigQuery dataset.

Running a SQL query against that BigQuery data returns figures such as the following.

| Item | Value |
|---|---|
| Total hits | 30,600 |
| Total page views | 10,793 |
| Total number of events | 18,724 |
| Number of domains | 550 |
| Number of tracking IDs | 661 |
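The shape of such a summary query can be sketched as follows. To keep the example runnable locally, it uses Python's built-in `sqlite3` as a stand-in for BigQuery; the table name `hits` and its columns are hypothetical, and the real query and schema may differ.

```python
import sqlite3

# Assumption: table and column names are hypothetical.
SUMMARY_SQL = """
SELECT
  COUNT(*) AS total_hits,
  SUM(CASE WHEN hit_type = 'pageview' THEN 1 ELSE 0 END) AS total_pageviews,
  SUM(CASE WHEN hit_type = 'event' THEN 1 ELSE 0 END) AS total_events,
  COUNT(DISTINCT hostname) AS domains,
  COUNT(DISTINCT tracking_id) AS tracking_ids
FROM hits
"""


def summarize(rows):
    """Load (hit_type, hostname, tracking_id) rows and run the summary."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE hits (hit_type TEXT, hostname TEXT, tracking_id TEXT)"
    )
    conn.executemany("INSERT INTO hits VALUES (?, ?, ?)", rows)
    result = conn.execute(SUMMARY_SQL).fetchone()
    conn.close()
    return result
```

Page views and events do not add up to the total because other hit types are counted in the total as well.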

Customized beacon example

The numbers of hits, domains, and tracking IDs are interesting in themselves, but what I want to present in this post is an analysis of the Google Analytics customizations implemented on those sites.

As many readers will already know, Google Analytics can be customized in many ways, and many of these customizations show up as values embedded in the Google Analytics beacons. Here are some examples I found in the data stored in BigQuery.

Custom dimension

The name of a custom dimension cannot be determined from the beacon itself, so it has to be inferred from the value that is set. Below are some that I was able to infer.

- Google Tag Manager container ID

- Article author name

- Membership type

- client ID

- Login/Logout

- Time stamp

- breadcrumbs

- page type

- Product name

- Referrer

- Query parameters (collectively stored in one dimension)

- Other GA Tracking IDs installed on the same page

- Product attribute

- gclid

- Search condition (a format that packs everything into one dimension)

Most of these are conventional choices, but some sites set custom dimensions such as “Google Tag Manager container ID”, “other GA tracking IDs installed on the same page”, and “query parameters”.
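Inferring a dimension’s meaning from its value can be partly automated. The sketch below pulls the custom dimension parameters (sent as `cd` followed by the dimension index) out of a parsed beacon and applies a few pattern-based guesses. The patterns and labels are rough heuristics assumed for this example, not the method actually used for the list above.

```python
import re

CD_KEY = re.compile(r"^cd(\d+)$")

# Assumption: rough heuristics for a few recognizable value formats.
GUESSES = [
    ("GTM container ID", re.compile(r"^GTM-[A-Z0-9]+$")),
    ("GA tracking ID", re.compile(r"^UA-\d+-\d+$")),
    ("client ID", re.compile(r"^\d+\.\d+$")),
]


def custom_dimensions(params: dict) -> dict:
    """Return {dimension index: value} for every cdN parameter."""
    result = {}
    for key, value in params.items():
        match = CD_KEY.match(key)
        if match:
            result[int(match.group(1))] = value
    return result


def guess_meaning(value: str):
    """Return a label for a recognizable value format, or None."""
    for label, pattern in GUESSES:
        if pattern.match(value):
            return label
    return None
```

Values such as author names or membership types have no fixed format, so they still need to be judged by eye.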

Event tracking

In my data, at least one tracked event was observed on 110 of the 550 measured domains. The two main kinds of event tracking appear to be click events and scroll events: click events were measured on 36 sites and scroll events on 20. Some sites also appear to have implementation flaws, such as event actions and event labels set to “undefined”.
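Flawed values like these are easy to flag mechanically. The following is a small sketch; the field names are hypothetical, and treating “null” as a flaw alongside “undefined” is an added assumption.

```python
# Assumption: "null" is included in addition to the "undefined" case
# mentioned in the text.
PLACEHOLDER_VALUES = {"undefined", "null"}

EVENT_FIELDS = ("event_category", "event_action", "event_label")


def broken_event_fields(row: dict) -> list:
    """Return the event fields whose value is a leaked placeholder."""
    broken = []
    for field in EVENT_FIELDS:
        value = row.get(field)
        if value is not None and value.strip().lower() in PLACEHOLDER_VALUES:
            broken.append(field)
    return broken
```

A missing field is not reported, since the event label is optional and its absence is not necessarily a mistake.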

A lot of event tracking also seems to be installed on Google’s own domains, especially on the Google Analytics site. Of the 1,400 event patterns measured, about 350 came from Google Analytics.

Outside the Google domains, too, each company is clearly putting thought into its event tracking. I will introduce one example that struck me as interesting.

| Item | Set value |
|---|---|
| Event category | Cross Domain Tracking |
| Event action | www.example.com > www.example.net |
| Event label | www.example.com/some1/ > www.example.net/some2/ |

It appears that when a cross-domain tracking link is clicked, the source and destination of the cross-domain transition are sent as an event. The specific implementation is unclear, but for sites that manage many domains and need cross-domain tracking, this seems useful for troubleshooting cross-domain problems.

Custom campaign

Only 29 of the measured hits had a custom campaign set. This is probably because I usually run an ad blocker, so most ads are never displayed.

Even so, a few things can be learned from those 29 hits. For example, Google Analytics lists “cpc”, “cpv”, “display”, “email”, “social”, “affiliate”, and so on as recommended values for the campaign “Medium” (utm_medium). However, few sites set a value that follows these recommendations.

In fact, the URL set for a page in Google Webmaster Tools is:

Also, the URL set for a page on Google’s marketing platform is as follows.

Because the default channel grouping settings can then be used as they are, using the recommended values seems like a good idea.
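Checking captured campaign hits against the recommended values could be sketched like this. The set below contains only the values named in this article and is therefore not exhaustive; the function name is hypothetical, and the comparison is exact, so how Google Analytics itself treats upper and lower case is not covered.

```python
# Assumption: only the values named in the article, not a complete list.
RECOMMENDED_MEDIUMS = {"cpc", "cpv", "display", "email", "social", "affiliate"}


def uses_recommended_medium(medium) -> bool:
    """Return True if the campaign medium is one of the listed values."""
    if medium is None:
        return False
    # Exact comparison after trimming surrounding whitespace.
    return medium.strip() in RECOMMENDED_MEDIUMS
```

Applying this to the campaign medium of each hit separates sites that follow the recommendations from those using their own naming.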

Summary

In this post, I used a Google Chrome extension I developed for my own use to examine the Google Analytics customizations implemented on various sites. There are not many chances to see how other people have implemented Google Analytics, but with the technique presented here I was able to inspect beacons from a variety of sites, albeit only for the hits my own browser sent. So far the data covers only about two months, and I would like to report again once more data has accumulated.